Basics to Advanced: Unleashing the Power of AWK in Advanced Linux Operations

Introduction

If you are a beginner or intermediate Linux user, you probably know basic commands such as cd, ls, sudo, and rmdir. These are fine for everyday work, but there is a powerful command that many users overlook, either because it looks difficult or because they don't know it exists.

When it comes to command-line text processing in Linux, AWK stands out as a versatile and powerful tool. In this article, we'll take a closer look at a basic AWK command and explore its capabilities in extracting specific information from text files.

Scenarios

Before you start learning any command or tool, it's important to understand how it can actually help you in real-life situations, especially in your daily tasks. If something seems fancy but doesn't have a practical use, it might not be worth learning.

Learning should be about gaining skills that make your everyday life easier and more efficient, tackling the challenges you face regularly. So, before diving in, consider whether what you're learning has a meaningful impact on your day-to-day activities.

That's why I am sharing some real use cases where the awk command can be really helpful.

Server Log Analysis:

Scenario: Analyzing Apache web server or any web server logs to extract information about the number of requests for each page.

Assuming you have an Apache log file named access.log with entries like:

192.168.0.1 - - [01/Jan/2022:12:00:00 +0000] "GET /page1 HTTP/1.1" 200 1234
192.168.0.2 - - [01/Jan/2022:12:01:00 +0000] "GET /page2 HTTP/1.1" 404 5678
192.168.0.3 - - [01/Jan/2022:12:02:00 +0000] "GET /page1 HTTP/1.1" 200 7890

You can use the awk command to extract and count the number of requests for each page.

Output

2 /page1
1 /page2
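The command that produces this output is not shown above. One way to get it is sketched here, assuming the page path is the seventh whitespace-separated field, as it is in the sample entries. The function name count_requests is made up for illustration.

```shell
# Count requests per page. Assumes the request path is field 7,
# which holds for the sample log lines in this scenario.
count_requests() {
    awk '{ count[$7]++ }
         END { for (page in count) print count[page], page }' "$@" |
        sort -rn   # awk array order is unspecified, so sort by count
}

# Demo on the sample entries (read from standard input)
count_requests <<'EOF'
192.168.0.1 - - [01/Jan/2022:12:00:00 +0000] "GET /page1 HTTP/1.1" 200 1234
192.168.0.2 - - [01/Jan/2022:12:01:00 +0000] "GET /page2 HTTP/1.1" 404 5678
192.168.0.3 - - [01/Jan/2022:12:02:00 +0000] "GET /page1 HTTP/1.1" 200 7890
EOF
```

With a real file you would call it as count_requests access.log.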

CSV File Manipulation:

Scenario: Consider you have a CSV file named data.csv with the following content.

Name,Age,Occupation
Alice,28,Engineer
Bob,35,Developer
Charlie,22,Student
David,40,Manager

Now, let's say you want to extract and display only the "Name" and "Occupation" columns. AWK can do this in a single command.

Output

Name Occupation
Alice Engineer
Bob Developer
Charlie Student
David Manager
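The command itself is missing from the text above. A minimal sketch that gives this output is shown here, assuming a simple CSV with no quoted fields or embedded commas. The function name name_and_occupation is hypothetical.

```shell
# Print columns 1 and 3 of a comma-separated file.
# Assumes plain CSV: no quoted fields, no commas inside values.
name_and_occupation() {
    awk -F ',' '{ print $1, $3 }' "$@"
}

# Demo on the sample data
name_and_occupation <<'EOF'
Name,Age,Occupation
Alice,28,Engineer
Bob,35,Developer
Charlie,22,Student
David,40,Manager
EOF
```

The comma in print $1, $3 is what puts a space between the two columns.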

Password File Analysis:

Scenario: Extracting and displaying user information from the /etc/passwd file.

Network Configuration Review:

Scenario: Analyzing the output of the ifconfig command to display network interface details.

For example, the ifconfig command gives you this output:

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
        inet 192.168.0.2 netmask 255.255.255.0 broadcast 192.168.0.255
        inet6 fe80::a00:27ff:fe8a:e2ab prefixlen 64 scopeid 0x20<link>
        ether 08:00:27:8a:e2:ab txqueuelen 1000 (Ethernet)
        RX packets 1501 bytes 1666445 (1.6 MB)
        RX errors 0 dropped 0 overruns 0 frame 0
        TX packets 1275 bytes 96476 (96.4 KB)
        TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
        inet 127.0.0.1 netmask 255.0.0.0
        inet6 ::1 prefixlen 128 scopeid 0x10<host>
        loop txqueuelen 1000 (Local Loopback)
        RX packets 9 bytes 546 (546.0 B)
        RX errors 0 dropped 0 overruns 0 frame 0
        TX packets 9 bytes 546 (546.0 B)
        TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

By using the awk command, you can extract and display network interface details.

Output

Interface: eth0
IP Address: 192.168.0.2
Interface: lo
IP Address: 127.0.0.1
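A sketch of an awk filter that would turn the ifconfig output above into this summary is shown here. It assumes the newer ifconfig layout from the sample (interface name at the start of a line, IPv4 address on an indented line beginning with inet). The function name list_interfaces is made up for this example.

```shell
# Summarize interface names and IPv4 addresses.
# Assumes the ifconfig layout shown in the sample output.
list_interfaces() {
    awk '
        # Interface header lines start in column 1, e.g. "eth0: flags=..."
        /^[a-zA-Z]/ { name = $1; sub(/:$/, "", name); print "Interface:", name }
        # IPv4 lines have "inet" as the first field; inet6 lines are skipped
        $1 == "inet" { print "IP Address:", $2 }
    ' "$@"
}

# Demo on a shortened copy of the sample output
list_interfaces <<'EOF'
eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
        inet 192.168.0.2 netmask 255.255.255.0 broadcast 192.168.0.255
        inet6 fe80::a00:27ff:fe8a:e2ab prefixlen 64 scopeid 0x20<link>
lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
        inet 127.0.0.1 netmask 255.0.0.0
        inet6 ::1 prefixlen 128 scopeid 0x10<host>
EOF
```

On a live system the same function can read from a pipe: ifconfig | list_interfaces.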

Custom Log Parsing:

Scenario: Parsing a custom log format to extract relevant information.

Let's consider a custom log file named custom.log with entries like:

2022-01-01T12:00:00+00:00 | User=John | Action=Login | Status=Success
2022-01-01T12:15:00+00:00 | User=Alice | Action=Logout | Status=Success
2022-01-01T12:30:00+00:00 | User=Bob | Action=Login | Status=Failure

Assuming you want to extract and display the date, user, action, and status information, awk can give you output like this.

Output

Date: 2022-01-01T12:00:00+00:00 User: John Action: Login Status: Success
Date: 2022-01-01T12:15:00+00:00 User: Alice Action: Logout Status: Success
Date: 2022-01-01T12:30:00+00:00 User: Bob Action: Login Status: Failure
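The parsing command is not included above. One possible sketch is shown here, assuming every line has exactly the four " | "-separated parts from the sample and that each key=value pair contains a single "=". The function name parse_custom_log is hypothetical.

```shell
# Split each record on " | ", then split the key=value pairs on "=".
# Assumes the four-part layout of the sample custom.log lines.
parse_custom_log() {
    awk -F ' [|] ' '{
        split($2, user, "=")      # user[2] holds the value after "User="
        split($3, action, "=")
        split($4, status, "=")
        print "Date:", $1, "User:", user[2], "Action:", action[2], "Status:", status[2]
    }' "$@"
}

# Demo on the sample entries
parse_custom_log <<'EOF'
2022-01-01T12:00:00+00:00 | User=John | Action=Login | Status=Success
2022-01-01T12:15:00+00:00 | User=Alice | Action=Logout | Status=Success
2022-01-01T12:30:00+00:00 | User=Bob | Action=Login | Status=Failure
EOF
```

The field separator is written as ' [|] ' because a separator longer than one character is treated as a regular expression, and the brackets keep the pipe literal.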

These examples demonstrate how AWK can be applied in various real-world scenarios for data extraction, processing, and analysis in a Linux or Unix environment. The flexibility and power of AWK make it a valuable tool for system administrators, developers, and data analysts.

There are numerous use cases where the AWK command gets the job done without writing much code. I believe we are now ready to start learning it.

What is AWK?

Awk is a command-line utility and scripting language for text processing: you give it some text, and it can pick out certain columns, rows, or fields for you. You can tell it to search the text for strings or patterns and even replace them with other strings. It's a really powerful program, which is why developers and engineers use it all the time.

What is a utility?

"As a developer working in big tech companies, I probably overused awk. I used awk everywhere, especially in my shell scripting, because I'm really comfortable with it, and it's one of those programs that once you learn awk, you wonder why you didn't learn it sooner because it's such a powerful program." by Vishnu Tiwari

AWK is particularly well-suited for text processing and is commonly used in Unix and Unix-like operating systems for tasks such as data extraction, reporting, and text pattern matching. Being a command-line utility, it allows users to efficiently perform these tasks by executing commands in a terminal environment.

Basics - Syntax + Examples

Let's head to the basics and explore the awk command for printing columns and for setting the input and output field separators.

Syntax

awk options 'selection_criteria {action}' input-file > output-file

Examples

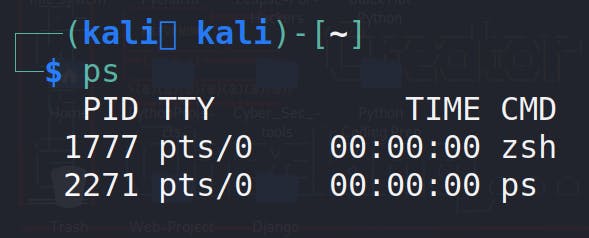

ps

Output

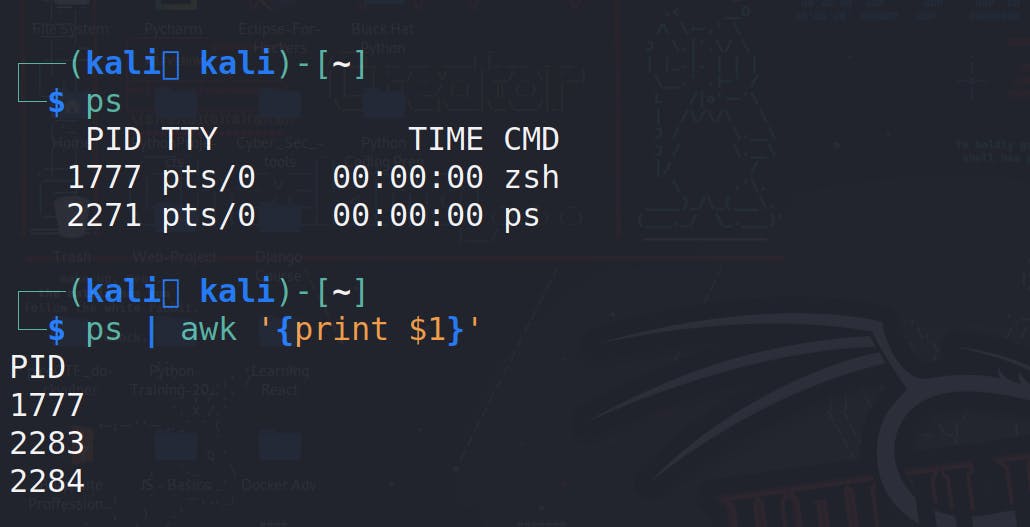

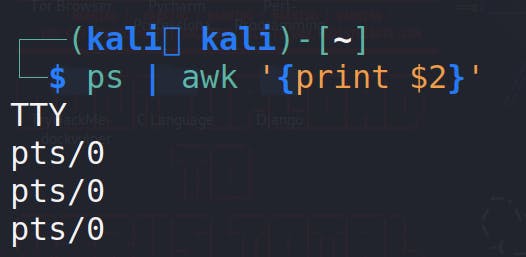

ps | awk '{print $1}'

ps command - the ps command in Linux provides information about the processes currently running on the system.

print $1 - prints the first column. To choose a different column, change the number after $, for example $2 for the second column.

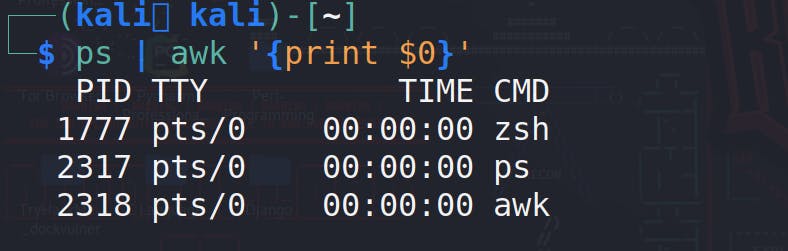

ps | awk '{print $0}'

$0 - the whole input line, so this prints everything unchanged, much like cat does for a file

Output

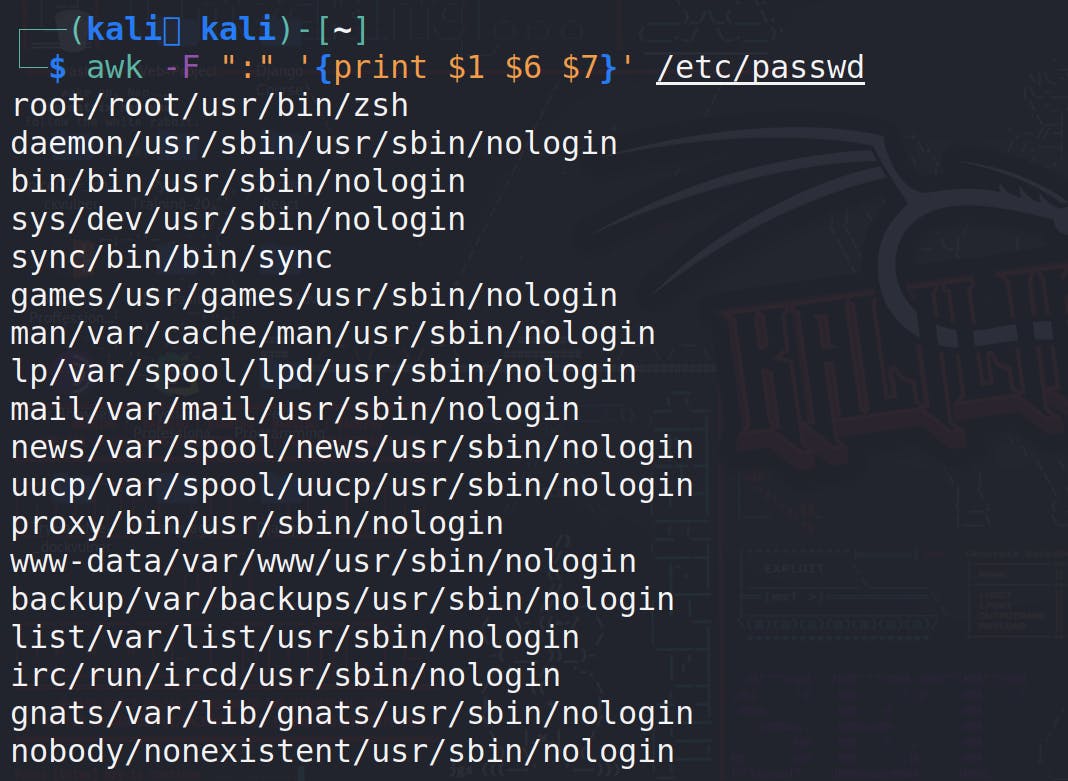

One of the files people love to use awk on in a GNU/Linux system is /etc/passwd, the file that lists all the users on your Linux system.

cat /etc/passwd

In the output there are not many spaces. The data is still organized into columns, but the columns are separated by colons.

So, to print all the users:

awk -F ":" '{print $1}' /etc/passwd

-F sets the field separator. By default awk splits fields on whitespace (spaces and tabs); here it splits each line on ':' instead.

If you want to print multiple columns, add their column numbers:

awk -F ":" '{print $1 $6 $7}' /etc/passwd

It's not very readable, because we didn't tell awk to separate the columns with spaces, colons, or anything else. We only told it to print columns 1, 6, and 7, so it joins them together with nothing in between.

awk -F ":" '{print $1 "\t" $6 "\t" $7}' /etc/passwd

\t inserts a tab character. In the output, the columns are now separated by tabs, which is much more readable.

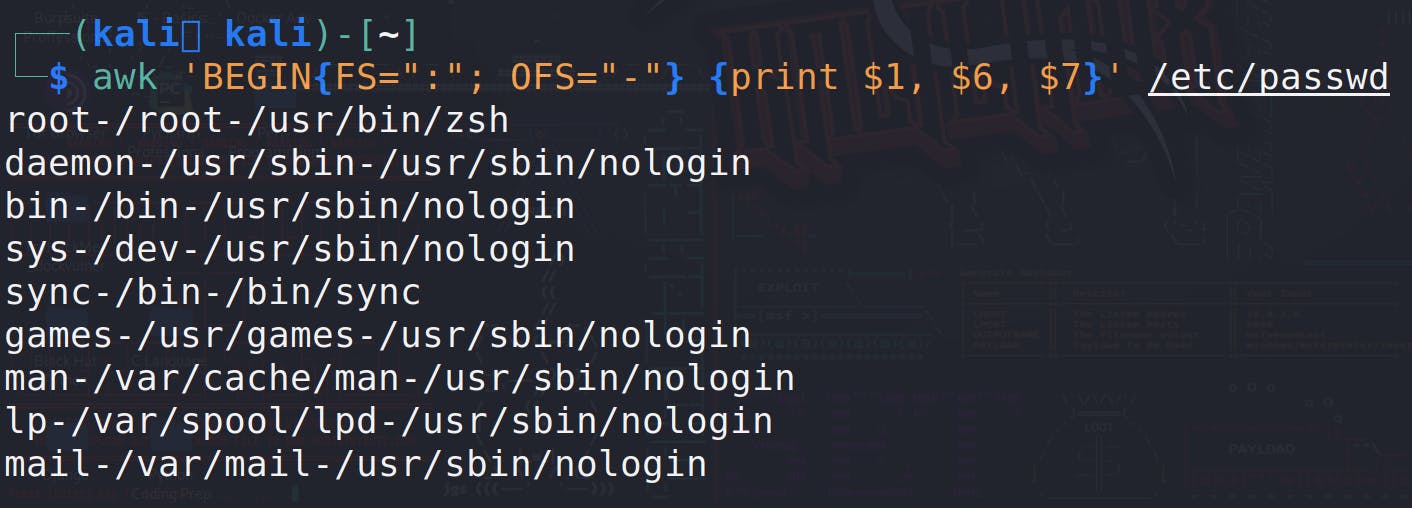

Besides specifying the field separator that awk uses to split the input into columns, you can also choose the separator it writes between columns in the output. Both can be set with this command:

awk 'BEGIN{FS=":"; OFS="-"} {print $1, $6, $7}' /etc/passwd

BEGIN{FS=":"; OFS="-"}: This part of the command is executed before processing any input lines. It sets the input field separator (FS) to a colon (:) and the output field separator (OFS) to a dash (-).
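To see FS and OFS working on known data, here is a small sketch that wraps the command above in a function and adds a second variant. The function names user_home_shell and colons_to_tabs and the sample passwd line are assumptions for illustration. The second function relies on standard awk behavior: assigning to any field rebuilds the whole line using OFS.

```shell
# Same command as above, wrapped so it can read a file or standard input
user_home_shell() {
    awk 'BEGIN { FS = ":"; OFS = "-" } { print $1, $6, $7 }' "$@"
}

# Convert every colon to a tab: assigning $1 to itself forces awk
# to rebuild $0 with OFS between all fields
colons_to_tabs() {
    awk 'BEGIN { FS = ":"; OFS = "\t" } { $1 = $1; print }' "$@"
}

# Demo on a typical (made-up) passwd entry
sample='root:x:0:0:root:/root:/bin/bash'
printf '%s\n' "$sample" | user_home_shell
printf '%s\n' "$sample" | colons_to_tabs
```

Note that OFS only appears where print arguments are separated by commas; print $1 $6 $7 would still join the values with nothing between them.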

Intermediate - AWK for Daily + Official Use

At this level, we go one step up from the basics and learn about patterns.

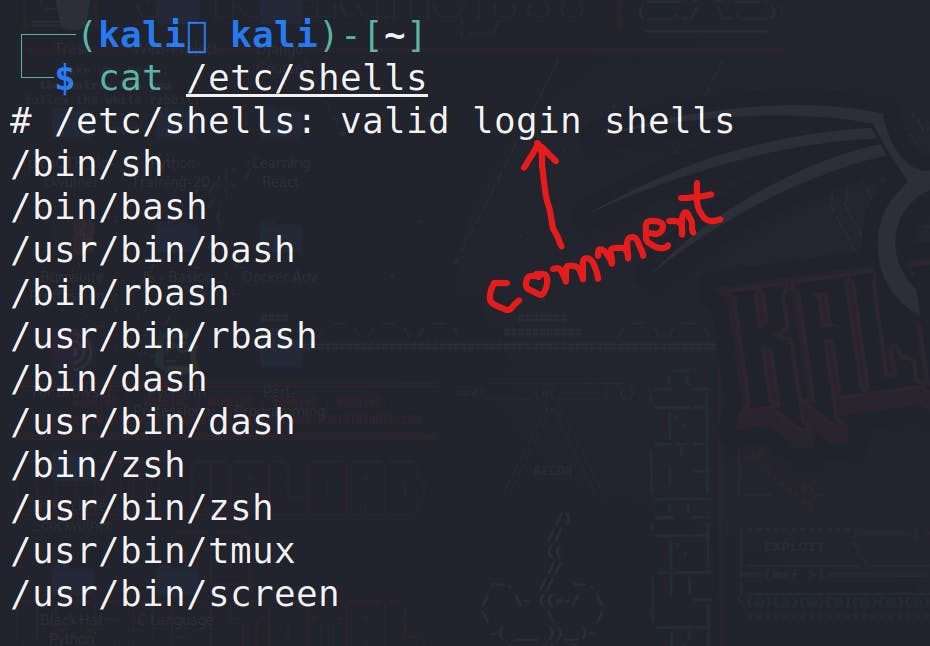

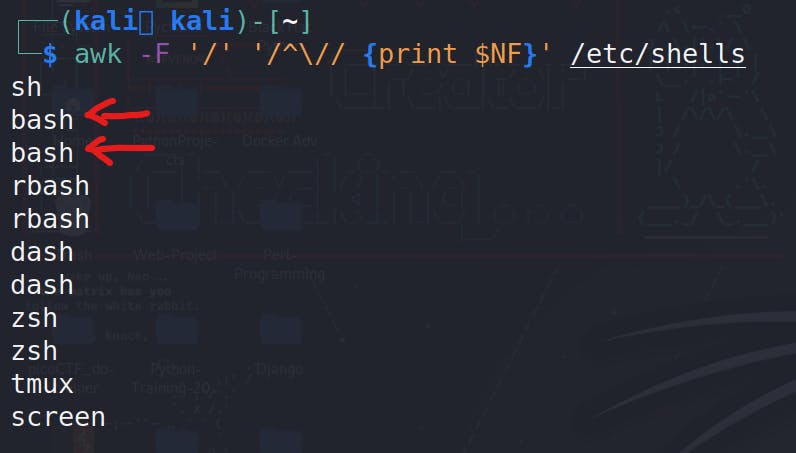

- To get the last column, use $NF. For example, say you want to print all the shells present on your machine.

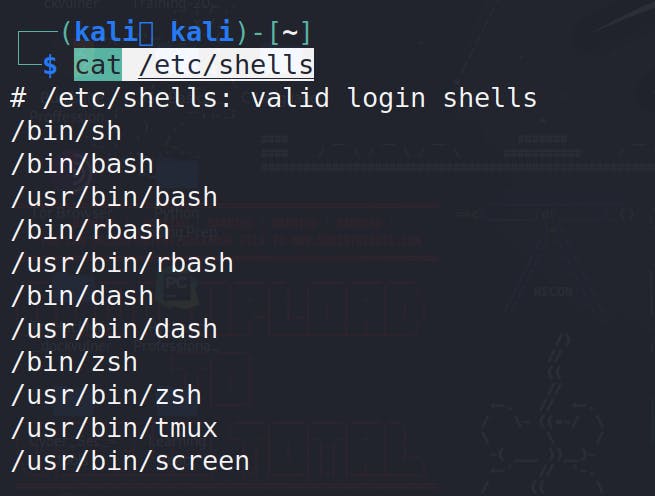

cat /etc/shells

As you can see in the output, the first line is a comment, which is not a valid shell. Therefore, we only need the lines that start with a forward slash '/'. To achieve this, we can use the following command.

awk -F '/' '/^\// {print $NF}' /etc/shells

// - AWK treats anything between these two forward slashes as a pattern (a regular expression) to search for.

^ - the anchor that indicates the beginning of the line.

\/ - the backslash tells AWK that the forward slash after it is a literal character, not the closing slash of the pattern.

But in the output, you may see duplicate entries, which don't look good. We can use 'uniq' to drop duplicates, but uniq only removes duplicate lines that are next to each other, so the output has to be sorted first.

awk -F '/' '/^\// {print $NF}' /etc/shells | sort | uniq

sort can also do both jobs in one step with -u:

awk -F '/' '/^\// {print $NF}' /etc/shells | sort -u
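Duplicates can also be dropped inside awk itself, which keeps the original order of the file. This is a sketch of the common seen-array idiom, not something the commands above depend on. The function name unique_shell_names and the sample file content are assumptions.

```shell
# Print each shell name once, in first-seen order.
# seen[$NF]++ is 0 (false) the first time a name appears, so the
# negation is true only on the first occurrence.
unique_shell_names() {
    awk -F '/' '/^\// && !seen[$NF]++ { print $NF }' "$@"
}

# Demo on a made-up /etc/shells
unique_shell_names <<'EOF'
# /etc/shells: valid login shells
/bin/sh
/bin/bash
/usr/bin/bash
/usr/bin/sh
/bin/zsh
EOF
```

On a real system you would run unique_shell_names /etc/shells.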

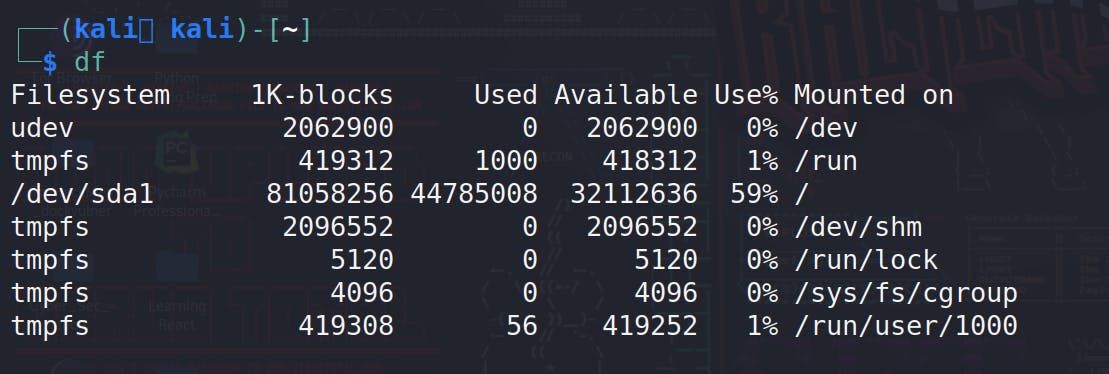

And let's run the 'df' command because it is another common command that provides nice columned information, and people often enjoy using AWK on its output

df

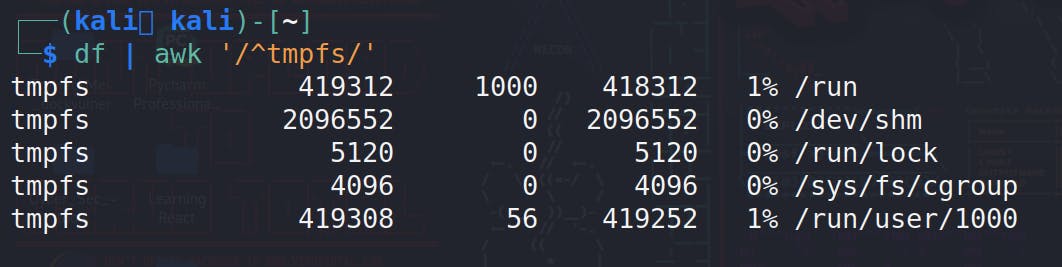

To print only the tmpfs file systems:

df | awk '/^tmpfs/'

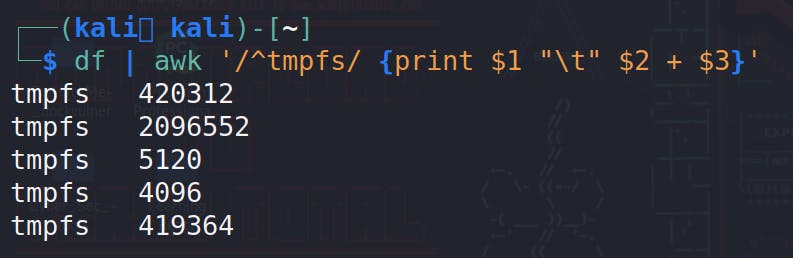

You can also perform arithmetic on the columns, such as addition, multiplication, and division.

df | awk '/^tmpfs/ {print $1 "\t" $2 + $3}'
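Column arithmetic becomes more useful when it accumulates across lines. The sketch below totals the third column (Used) for all tmpfs rows. The function name tmpfs_used_total and the sample df output are made up, and the column position assumes the default df layout.

```shell
# Sum the "Used" column (field 3) over all tmpfs rows.
# Assumes the default df column order: Filesystem, 1K-blocks, Used, ...
tmpfs_used_total() {
    awk '/^tmpfs/ { used += $3 }
         END { print "tmpfs used (1K-blocks):", used + 0 }' "$@"
}

# Demo on hypothetical df output
tmpfs_used_total <<'EOF'
Filesystem     1K-blocks    Used Available Use% Mounted on
tmpfs             401000    1500    399500   1% /run
/dev/sda1       50000000 2000000  48000000   4% /
tmpfs            2005000     500   2004500   1% /dev/shm
EOF
```

The used + 0 in the END block makes the result print as 0 rather than an empty string when no tmpfs row is found. With live data: df | tmpfs_used_total.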

You can also select lines based on conditions.

cat /etc/shells

To print the lines shorter than 8 characters:

awk 'length($0) < 8' /etc/shells

The same length test can be applied to the shell name ($NF) to print only the shells whose names are shorter than 5 characters.
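No command is shown for this last case. A possible sketch, combining the length test with the -F '/' and $NF technique used earlier, is given here. The function name short_shell_names and the sample input are hypothetical.

```shell
# Print shell names (the part after the last "/") shorter than 5 characters
short_shell_names() {
    awk -F '/' '/^\// && length($NF) < 5 { print $NF }' "$@"
}

# Demo on a made-up /etc/shells
short_shell_names <<'EOF'
# /etc/shells: valid login shells
/bin/sh
/bin/bash
/bin/rbash
/usr/bin/tmux
EOF
```

Here rbash is filtered out because it has exactly 5 characters.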

Advanced - AWK For Pro Developers

At this level, we go one more step up from the intermediate level and learn about if/else, loops, and more.

if / else in awk

Syntax

awk '{
    if (condition) {
        print $1;
    } else {
        print $2;
    }
}' input_file

Example

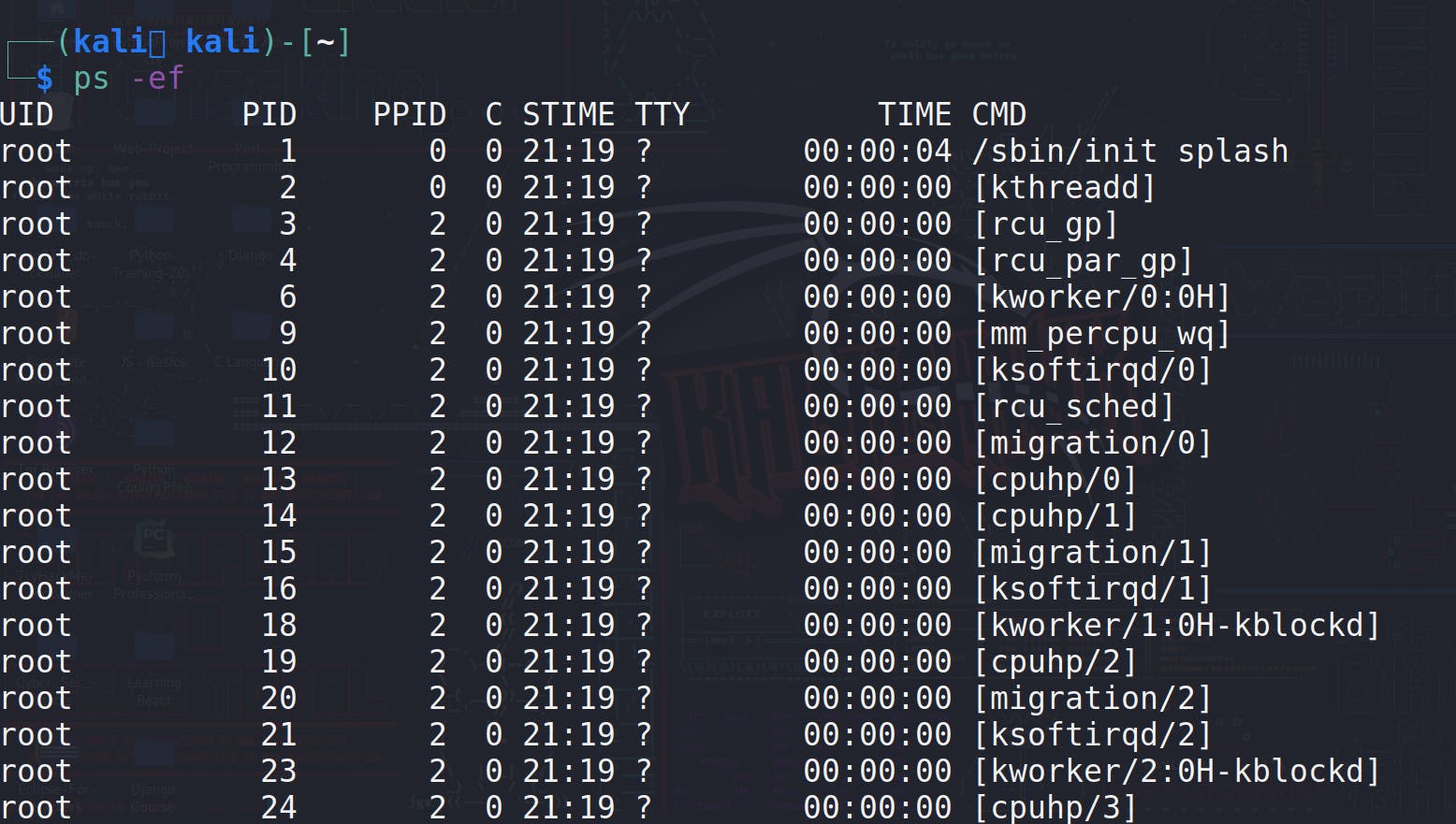

ps -ef # prints all the processes on your system

Now we can check whether a process is a kworker. To print all the kworker processes:

ps -ef | awk '{if ($NF~ /kworker/) print $0}'

We can also label each process as either a kworker or a regular process by using else.

ps -ef | awk '{if ($NF~ /kworker/){ print $NF "\t kworker process"} else {print $NF "\t regular process"}}'

For loop

Since awk is a scripting language, we can also use loops. In AWK, there are two main types of loops: the for loop and the while loop.

awk '{
    for (i = 1; i <= 5; i++) {
        print "Number:", i;
    }
}' input_file

With this syntax you must provide input (a file or standard input), because the loop runs once for every input line.

To run the loop just once, without passing any input file, put it in a BEGIN block.

awk 'BEGIN { for (i = 1; i <= 5; i++) print "Number:", i }'
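The difference between a loop in the main block and a loop in BEGIN can be seen side by side in this small sketch. The two-line input and the variable names per_line and once are made up for the demo.

```shell
# Main block: the loop body runs once for every input line (two lines here)
per_line=$(printf 'a\nb\n' |
    awk '{ for (i = 1; i <= 2; i++) print "Line", NR, "Number:", i }')

# BEGIN block: runs once, before any input is read, so no file is needed
once=$(awk 'BEGIN { for (i = 1; i <= 2; i++) print "Number:", i }')

printf '%s\n' "$per_line" "---" "$once"
```

The first command prints four lines (two per input line), the second prints two.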

while loop

In AWK, you can use a while loop to repeatedly execute a block of code as long as a certain condition is true. Here's a simple example of using a while loop in AWK:

awk '{
    i = 1;
    while (i <= 5) {
        print "Number:", i;
        i++;
    }
}' input_file

In this example:

- i = 1: Initialization of the loop variable i to 1.
- while (i <= 5): The condition for the loop to continue as long as i is less than or equal to 5.
- print "Number:", i: Code inside the loop that prints the current value of i.
- i++: Incrementing i by 1 in each iteration.
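In practice, loops in awk are most often used to walk over the fields of a line. The sketch below uses the same while structure as above, but runs from 1 to NF (the number of fields) and adds the fields up. The function name sum_fields and the sample numbers are assumptions.

```shell
# Add up all whitespace-separated fields on each line
sum_fields() {
    awk '{
        total = 0
        i = 1
        while (i <= NF) {      # NF is the number of fields on this line
            total += $i        # $i is the i-th field
            i++
        }
        print "Line", NR, "sum:", total
    }' "$@"
}

# Demo
printf '1 2 3\n10 20\n' | sum_fields
```

Resetting total at the start of each line matters, because awk variables keep their values from one line to the next.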

else if

In AWK, you can use if and else if statements to implement conditional logic. Here's an example:

awk '{
    if ($1 > 10) {
        print $1 " is greater than 10";
    } else if ($1 == 10) {
        print $1 " is equal to 10";
    } else {
        print $1 " is less than 10";
    }
}' input_file

In this example, the AWK script reads input from input_file (you can replace it with your actual file name), and for each line, it checks the value in the first column ($1). Depending on the value, it prints a different message.

Explanation:

- if ($1 > 10): If the value in the first column is greater than 10, execute the corresponding block of code.
- else if ($1 == 10): If the value is equal to 10, execute this block of code.
- else: If none of the above conditions are true, execute this block.

You can adjust the conditions and actions inside the blocks to suit your specific requirements.
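One adjustment worth considering: if the first column is not a number, awk compares it as a string, so a value like abc would be reported as greater than 10. The sketch below adds one more branch to the chain to catch that. The function name classify_numbers, the sample input, and the simple number pattern (optional minus sign, digits, optional decimal part) are assumptions for illustration.

```shell
# Classify the first column against 10, guarding against non-numeric input
classify_numbers() {
    awk '{
        if ($1 !~ /^-?[0-9]+(\.[0-9]+)?$/) {
            print $1 " is not a number"
        } else if ($1 > 10) {
            print $1 " is greater than 10"
        } else if ($1 == 10) {
            print $1 " is equal to 10"
        } else {
            print $1 " is less than 10"
        }
    }' "$@"
}

# Demo
printf '12\n10\n3\nabc\n' | classify_numbers
```

The order of the branches matters: the first condition that is true wins, so the validity check goes first.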

Some Common Useful Commands For Daily Use

substr

The substr function in AWK is used to extract a portion of a string. Its basic syntax is:

substr(string, start[, length])

- string: The input string from which you want to extract a substring.
- start: The position in the string where extraction begins. The position is 1-based.
- length (optional): The number of characters to extract. If omitted, it extracts the substring from the start position to the end of the string.

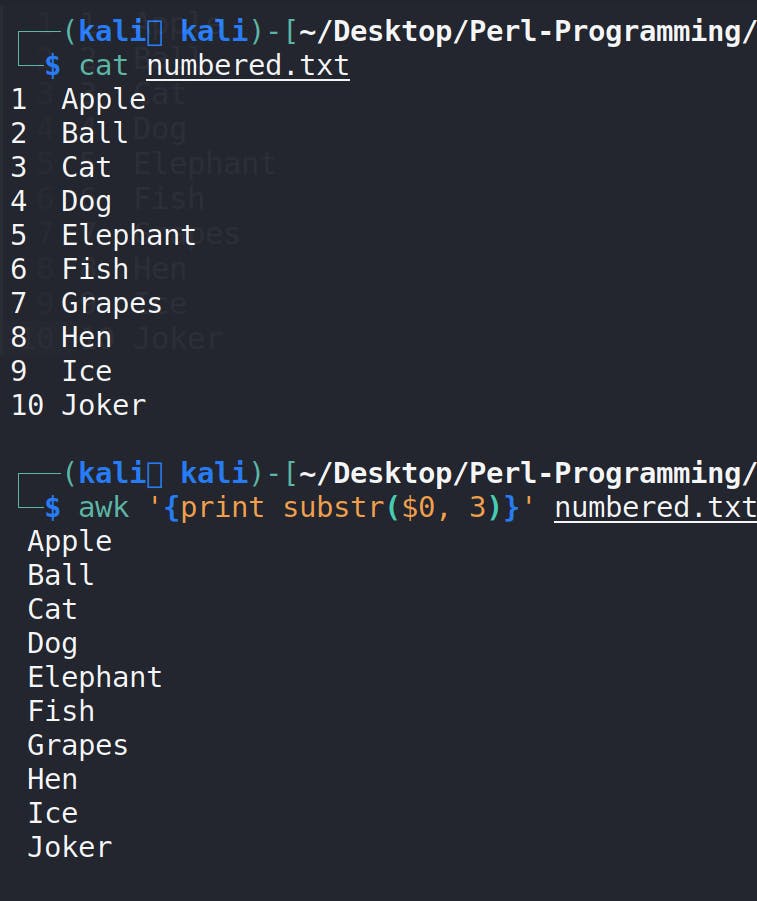

For example, suppose you have a file and want to use substr on it. The right arguments depend on the layout of the file, but as a demo, this command prints each line starting from its third character:

awk '{print substr($0, 3)}' numbered.txt
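The content of numbered.txt is not shown, so the sketch below assumes lines of the form 1.apple, where the first two characters are a numbering prefix. The function names drop_prefix and first_three are hypothetical.

```shell
# substr with two arguments: from position 3 to the end of the line
drop_prefix() {
    awk '{ print substr($0, 3) }' "$@"
}

# substr with three arguments: 3 characters starting at position 1
first_three() {
    awk '{ print substr($0, 1, 3) }' "$@"
}

# Demo on assumed sample lines
printf '1.apple\n2.banana\n' | drop_prefix
printf 'banana\n' | first_three
```

Because positions are 1-based, substr($0, 3) skips exactly two characters.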

match, RSTART and RLENGTH

In AWK, the match function searches a string for a specified pattern and sets RSTART and RLENGTH to the starting position and length of the matched substring, respectively. It returns the starting position of the match, or 0 if nothing matches, which is why it can be used directly as a condition. The basic syntax of match is as follows:

match(string, regexp)

- string: The input string where you want to search for the pattern.
- regexp: The regular expression pattern to search for in the string.

Here's an example:

awk 'match($0,/o/) {print $0, "Has O character at index", RSTART, "with length", RLENGTH}' numbered.txt
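RSTART and RLENGTH are most useful together with substr, because the pair describes exactly which part of the string matched. The sketch below pulls the first run of digits out of each line. The function name first_number and the sample lines are made up.

```shell
# Print the first run of digits found on each line; lines without
# digits produce no output because match() returns 0 for them
first_number() {
    awk 'match($0, /[0-9]+/) { print substr($0, RSTART, RLENGTH) }' "$@"
}

# Demo
printf '%s\n' 'order 4521 shipped' 'no digits here' 'a1b22' | first_number
```

For the last sample line only the first match (1) is printed, since match always reports the leftmost match.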

NR

In AWK, NR is a built-in variable that holds the number of the current record (line) being processed. AWK increments it automatically as it reads each input line. NR is especially useful when you want to perform actions based on the line number. For example, the range pattern NR==6, NR==8 selects lines 6 through 8:

df | awk 'NR==6, NR==8 {print NR".", $0}'

To check the line count of any input:

df | awk 'END {print NR}'
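Both NR techniques can be tried on predictable input with the sketch below. It passes the range limits in with -v instead of hard-coding them. The function names show_lines and count_lines, the variable names first and last, and the four-line sample are assumptions.

```shell
# Print lines first..last of standard input, each prefixed with its number
show_lines() {
    awk -v first="$1" -v last="$2" \
        'NR >= first && NR <= last { print NR ".", $0 }'
}

# Print the total number of lines; in END, NR still holds the last line number
count_lines() {
    awk 'END { print NR }' "$@"
}

# Demo
printf 'a\nb\nc\nd\n' | show_lines 2 3
printf 'a\nb\nc\nd\n' | count_lines
```

The condition NR >= first && NR <= last selects the same lines as the range pattern NR==first, NR==last.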

For checking IP Addresses

Using ifconfig with AWK can be useful for parsing and extracting specific information about network interfaces. ifconfig provides details about the network configuration on a system, and AWK can help filter and format this information according to your needs.

ifconfig | awk '/^[a-zA-Z]/ {print "Interface: " $1} $1 == "inet" {print "IP Address: " $2}'

The test $1 == "inet" selects only the IPv4 lines; a plain /inet/ pattern would also match the inet6 lines.

Conclusion

In conclusion, our exploration of AWK has provided a comprehensive understanding of this powerful text processing tool. From its fundamental syntax to advanced features, we've delved into the intricacies that make AWK a versatile and indispensable asset in the realm of data manipulation and analysis.

Throughout the article, we've uncovered how AWK can be applied in various real-world scenarios, demonstrating its utility in tasks ranging from simple text processing to more complex data transformations. The practical examples and use cases serve as a guide for both beginners and experienced users, showcasing the flexibility and efficiency of AWK in handling diverse text-based challenges.

As we bring this AWK journey to a close, I extend my gratitude for your dedication in navigating through the intricacies of this scripting language. The knowledge gained here lays the foundation for enhanced productivity and problem-solving capabilities. Whether you're a newcomer or a seasoned AWK enthusiast, may the skills acquired pave the way for seamless text processing endeavors.

That's a wrap on this article, where we journeyed from AWK basics to advanced ninja moves and explored its everyday applications. Kudos for sticking with it till the end! Much love and catch you at the next article. Stay sharp and keep rocking that tech game! ✌️

Thank you again for your time and commitment. Here's to harnessing the full potential of AWK in your future endeavors. Until our paths cross again in the realm of knowledge exploration, stay curious and keep scripting!